
Multimodal AI Project

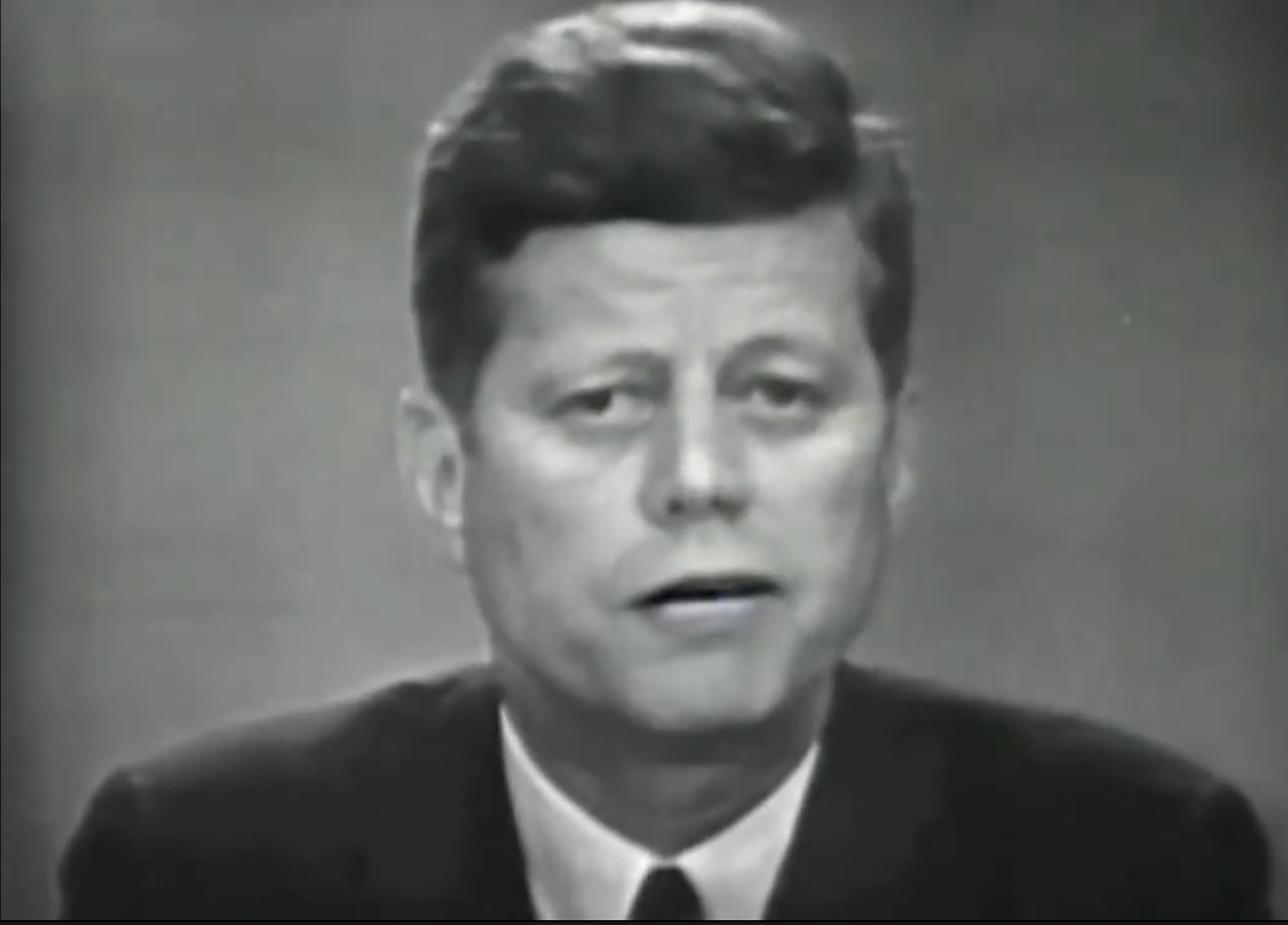

This proof-of-concept explores how a historical figure can be represented through LLM behavior, voice synthesis, and animated visual presentation, and how combining the three produces a more convincing historical interaction than any one of them alone.

Demo Preview

The preview links to footage showing the voice, avatar, and language-model pieces working together.

System Stack

The system combines OpenAI-driven personality emulation, ElevenLabs voice synthesis, and a digital portrait to create a more immersive historical interaction than a text-only chatbot.
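The flow of a single exchange through the three components can be sketched roughly as follows. This is an illustrative outline only, not the project's actual code: `Turn`, `run_turn`, and the three stage callables are hypothetical names, and the real OpenAI, ElevenLabs, and portrait calls are left as injected functions so that no vendor API details are assumed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Turn:
    """Result of one user exchange across all three modalities."""
    reply_text: str      # text produced by the language model stage
    audio: bytes         # speech produced by the voice synthesis stage
    portrait_state: str  # state reported by the portrait/avatar stage


def run_turn(
    user_text: str,
    generate_reply: Callable[[str], str],   # hypothetical wrapper around the LLM call
    synthesize: Callable[[str], bytes],     # hypothetical wrapper around voice synthesis
    animate: Callable[[bytes], str],        # hypothetical wrapper around the portrait
) -> Turn:
    """Run text -> speech -> portrait in order and collect the outputs."""
    reply = generate_reply(user_text)
    audio = synthesize(reply)
    state = animate(audio)
    return Turn(reply_text=reply, audio=audio, portrait_state=state)


if __name__ == "__main__":
    # Stub stages stand in for the real services.
    turn = run_turn(
        "What was your view on the space program?",
        generate_reply=lambda text: f"(reply to: {text})",
        synthesize=lambda reply: reply.encode("utf-8"),
        animate=lambda audio: "speaking" if audio else "idle",
    )
    print(turn.portrait_state)
```

Passing the stages in as functions keeps the sketch testable without network access; in a real build each callable would wrap the corresponding service client.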

The assistant was shaped using JFK speeches, interviews, and secondary sources to keep responses historically grounded.
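One common way to apply this kind of grounding is to assemble a system prompt from short source excerpts. The sketch below assumes that approach; the source does not say how the material was actually used. `SourceExcerpt`, `build_system_prompt`, and `build_messages` are hypothetical helpers, and the excerpt contents are placeholders rather than real quotations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceExcerpt:
    kind: str   # e.g. "speech", "interview", "secondary source"
    title: str  # label for the excerpt (placeholder values in this sketch)
    text: str   # excerpt body (placeholder values in this sketch)


def build_system_prompt(figure: str, excerpts: list[SourceExcerpt]) -> str:
    """Combine persona instructions with numbered source excerpts."""
    lines = [
        f"You are portraying {figure}.",
        "Stay consistent with the source material below.",
        "If the sources do not cover a topic, say so instead of inventing details.",
        "",
        "Source material:",
    ]
    for i, ex in enumerate(excerpts, start=1):
        lines.append(f"[{i}] ({ex.kind}) {ex.title}: {ex.text}")
    return "\n".join(lines)


def build_messages(system_prompt: str, user_text: str) -> list[dict[str, str]]:
    """Return role/content messages in the usual chat format."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]


if __name__ == "__main__":
    excerpts = [
        SourceExcerpt("speech", "<speech title>", "<excerpt text>"),
        SourceExcerpt("interview", "<interview title>", "<excerpt text>"),
    ]
    prompt = build_system_prompt("John F. Kennedy", excerpts)
    print(build_messages(prompt, "How did you approach public speaking?"))
```

Numbering the excerpts makes it possible to ask the model to point back to a specific source, which helps when checking whether a reply stayed within the material.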

ElevenLabs was used to generate speech output that matched the chosen historical figure's recognizable voice.

A digital portrait was used to add visual presence, expression, and stronger multimodal immersion.

Challenges and impact

Project Links

Demo footage and the original concept pitch for the project.